As an AI Engineer building production systems, you’ve probably watched the steady increase in context-window sizes for large language models (LLMs) with more than a little excitement. Longer context windows promise fewer context-truncation problems, the ability to feed whole documents (or many of them) into the model at once, and more coherent multi-turn interactions. That progress raises a practical question for architects and builders of AI systems: if LLMs can ingest massive amounts of context natively, are Retrieval-Augmented Generation (RAG) pipelines still necessary?

The short answer, in my view: yes. In most realistic production scenarios, RAG remains a crucial pattern. But the long answer is far richer. Below I unpack why RAG still matters, how wider context windows change the trade-offs, when you might be able to simplify or skip RAG, and concrete design choices an AI Engineer should consider when deciding whether to keep, modify, or retire RAG in a platform.

Quick refresher: what is RAG, and what does a larger context window mean?

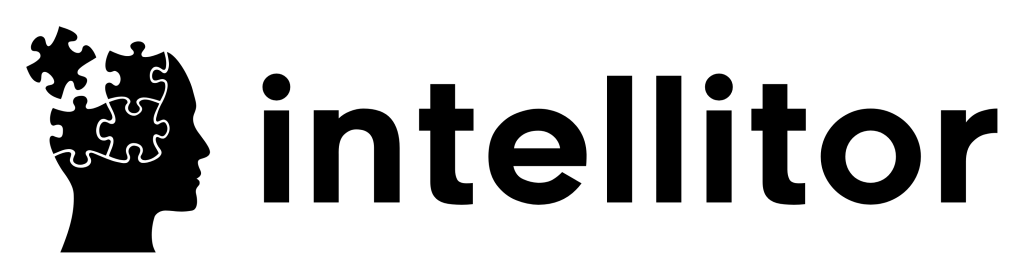

Retrieval-Augmented Generation (RAG) is a system design pattern that separates a knowledge retrieval step from an LLM generation step. The high-level flow:

Query → vector index or search over a knowledge base (documents, product manuals, customer logs).

Retrieve top-k relevant passages (often with embedding similarity).

Optionally filter or rank these passages.

Construct a prompt that includes the retrieved context + the user query.

Send to the LLM to generate the final answer.
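The flow above can be sketched in a few lines. This is a toy illustration, not a production retriever: `embed` is a hypothetical stand-in that uses bag-of-words counts instead of a real embedding model, the knowledge base is three made-up sentences, and the prompt is only built, not sent to an LLM.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages most similar to the query."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Number the passages so the model's answer can cite them."""
    numbered = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(context))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{numbered}\n\nQuestion: {query}"
    )


# Made-up knowledge base for illustration only.
knowledge_base = [
    "The warranty covers battery defects for two years.",
    "Returns are accepted within 30 days of purchase.",
    "The device charges over USB-C.",
]
question = "how long is the battery warranty"
top = retrieve(question, knowledge_base, k=2)
prompt = build_prompt(question, top)  # this string is what would go to the LLM
```

In a real system, `embed` would call an embedding model and `retrieve` would query an ANN index, but the shape of the pipeline stays the same.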

RAG’s goals are clear: ground responses in verifiable facts, enable access to up-to-date or private data without retraining, reduce hallucinations, and control information flow for compliance and cost.

A context window is the maximum number of tokens an LLM can process in one request: the prompt and, for many models, the generated tokens as well. Recent models have expanded context from a few thousand tokens to hundreds of thousands, and in some cases a million or more. Larger windows reduce the need to chop documents into tiny chunks and allow models to consider more context simultaneously.

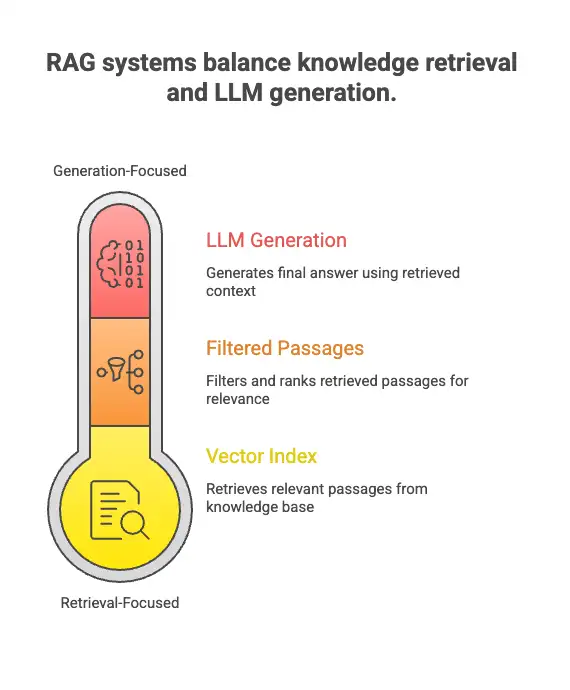

Why larger context windows are attractive (and what they change)

From an engineering perspective, bigger context windows bring clear advantages:

Less document chopping: You can feed whole long documents (e.g., a complete contract, an entire chapter, or a long support ticket thread) instead of heuristic chunking.

Better long-range coherence: The model can directly reason across parts of a document that used to be separated by chunk boundaries.

Simpler UX for multi-turn tasks: Sessions that must recall earlier long messages or logs can keep more of the conversation in model context.

Reduced retrieval latency for some flows: If you can include the whole knowledge source in the prompt, you eliminate the separate retrieval step and the associated I/O.

However, larger windows do not automatically solve the core challenges that motivated RAG. They change the trade-offs, but they don’t erase the fundamental concerns around cost, timeliness, hallucination control, compliance, and engineering complexity.

Core reasons RAG remains essential

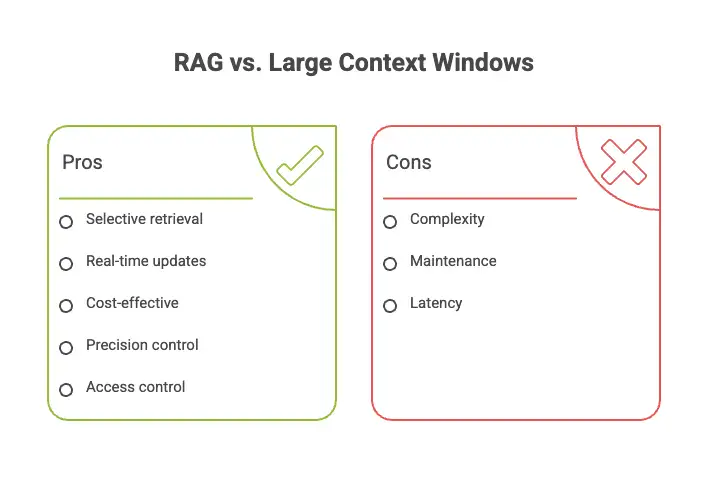

1. Scale of knowledge vs. token capacity

Even enormous context windows are finite. Large enterprises hold terabytes of documents. You can’t (and shouldn’t) pour everything into a single prompt. RAG allows selective retrieval: we provide the model with only the most relevant slices of knowledge. That principle is not broken by larger windows; it becomes more important as the scale grows.

2. Freshness and timeliness

A model’s internal knowledge (its weights) is static unless retrained. RAG lets you attach live company data: product catalogs, legal changes, financials, or user records. Larger context windows don’t give you real-time updates unless you keep feeding new documents into the prompt, which is exactly what retrieval does, only more efficiently.

3. Cost & latency

Tokens cost money. Feeding long documents into every request can be significantly more expensive than retrieving a small set of salient passages. Processing huge prompts also increases latency, since the model must read the entire input before it generates the first output token. A smart RAG pipeline minimizes tokens sent to the model by returning only high-value context.
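A back-of-the-envelope calculation makes the gap concrete. Every number here is an assumption chosen for illustration: the price per million input tokens, the request volume, and the prompt sizes are hypothetical, and the sketch ignores output tokens, provider-side prompt caching, and the cost of running the retrieval infrastructure itself.

```python
def monthly_input_cost(
    tokens_per_request: int,
    requests_per_day: int,
    usd_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Input-token spend for one month at a flat per-token price."""
    total_tokens = tokens_per_request * requests_per_day * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


PRICE = 3.00  # hypothetical USD per million input tokens

# Assumed workloads: a 200k-token full-document prompt vs. a 4k-token RAG prompt.
full_doc = monthly_input_cost(200_000, 10_000, PRICE)
rag = monthly_input_cost(4_000, 10_000, PRICE)
ratio = full_doc / rag

print(f"full-document prompting: ${full_doc:,.0f}/month")
print(f"RAG prompting:           ${rag:,.0f}/month")
print(f"ratio:                   {ratio:.0f}x")
```

Under these made-up numbers the full-document approach costs 50 times more per month. The ratio is simply the ratio of prompt sizes, so the point holds at any price. Plug in your own traffic and pricing before drawing conclusions.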

4. Precision & hallucination control

RAG can enforce explicit grounding: include citations, passage references, and provenance metadata so the model’s output can be traced back to a source. This improves factuality and regulatory compliance. Throwing megabytes of text into the prompt without structure can make it harder to verify where the model’s facts came from.

5. Access control and privacy

Not all documents should be exposed to every query. RAG enables access control at retrieval-time: role-based retrieval, redaction, or selective indexing. A broad prompt that includes many documents risks accidental leakage.
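Here is a minimal sketch of access control at retrieval time. The `Passage` shape, the `allowed_roles` field, and the role names are all hypothetical. Real deployments often push this filter into the index query itself rather than applying it afterwards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # hypothetical per-passage access-control list


def filter_by_role(candidates: list[Passage], user_roles: set[str]) -> list[Passage]:
    """Drop any passage the caller may not see before it reaches the prompt."""
    return [p for p in candidates if p.allowed_roles & user_roles]


# Made-up retrieval results for illustration.
hits = [
    Passage("hr-001", "Salary bands for 2024 ...", frozenset({"hr"})),
    Passage("kb-042", "How to reset a customer password ...", frozenset({"hr", "support"})),
]
visible = filter_by_role(hits, user_roles={"support"})
print([p.doc_id for p in visible])
```

Because filtering happens before prompt construction, a support agent’s query can never pull the HR passage into the model’s context, no matter how semantically similar it is.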

6. Indexing & semantic search benefits

Embedding-based indexes give you vectorized search capabilities: semantic retrieval, approximate nearest neighbors (ANN), and re-ranking. These are valuable features on their own (faceted search, clustering, similarity search) regardless of model context size.

7. Operational and engineering considerations

RAG splits responsibilities into discrete services: indexers, retrievers, rankers, and the LLM. This separation simplifies monitoring, debugging, and observability. If a model misbehaves, you can inspect the retrieved passages and see whether the input was faulty, which is much harder when everything is buried in one monolithic prompt.

When larger context windows can reduce reliance on RAG

That said, there are scenarios where large windows reduce the need for an elaborate RAG pipeline:

Single-source, short knowledge domains: If your product’s knowledge is small (a 50–200KB manual) and rarely changes, feeding it directly may be faster and simpler.

Ad-hoc research where latency/cost are acceptable: For one-off exploratory tasks, it’s okay to include larger documents and pay extra tokens.

Privacy-isolated tools with small corpora: If all relevant content is personal and small enough, placing it directly in the prompt (with proper redaction) can be acceptable.

Prototyping: Early experiments and demos can skip RAG to iterate faster. But productionizing such prototypes without RAG often leads to technical debt.

Even in these cases, many organizations still prefer a minimal retrieval layer: a simple keyword search or small-embedding retrieval to avoid redundant token usage.

New hybrid patterns enabled by big context windows

The interaction between larger windows and RAG isn’t binary. Several hybrid patterns are emerging:

Long-context RAG: Retrieve a small set of highly relevant documents but include full-length documents (not chunks) in the prompt because the window permits it. This reduces chunk boundary issues while preserving retrieval precision.

Chunk + global context: Keep a background “global context” (policy, company style guide) in the prompt, and retrieve per-query passages to augment that. Global context fits comfortably in larger windows and improves consistent behavior.

Hierarchical RAG: Use coarse retrieval to select documents and then use a second-level retrieval/reranker or local summarizer to condense content into the prompt. Larger windows let you do more in the second level; for example, you can include entire sections for richer prompting.

On-the-fly summarization: For very long retrieved documents, run an LLM summarizer to produce a concise, high-fidelity summary which is then included in the final prompt. Large windows reduce the need for aggressive summarization but don’t eliminate it.

Sliding-window session memory + RAG: Use the LLM’s long context to hold session memory (user preferences, recent conversation) and use RAG for factual grounding (knowledge base), combining both for richer interactions.
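As one illustration of these patterns, here is a sketch of long-context RAG: retrieval still happens at chunk level, but each hit is promoted to its full parent document as long as the document fits in a token budget. The function names are my own, and `count_tokens` is a crude assumption (one token per whitespace-separated word). A real system would use the model’s tokenizer.

```python
def count_tokens(text: str) -> int:
    """Crude assumption: one token per whitespace-separated word."""
    return len(text.split())


def select_full_documents(
    ranked_chunk_doc_ids: list[str],
    documents: dict[str, str],
    token_budget: int,
) -> list[str]:
    """Promote chunk hits to whole documents, best-ranked first, within budget."""
    selected: list[str] = []
    used = 0
    # dict.fromkeys removes duplicate doc ids while keeping rank order.
    for doc_id in dict.fromkeys(ranked_chunk_doc_ids):
        cost = count_tokens(documents[doc_id])
        if used + cost <= token_budget:
            selected.append(doc_id)
            used += cost
    return selected


# Made-up corpus: document sizes in "tokens" are 50, 80, and 30.
documents = {"a": "w " * 50, "b": "w " * 80, "c": "w " * 30}
chunk_hits = ["b", "a", "b", "c"]  # parent doc id of each retrieved chunk, best first
chosen = select_full_documents(chunk_hits, documents, token_budget=120)
print(chosen)
```

With a budget of 120, document `b` (80) is taken, `a` (50) is skipped because it would overflow, and `c` (30) still fits. Skipping rather than stopping at the first overflow is a design choice. Stopping keeps strict rank order at the cost of unused budget.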

Cost, performance, and safety trade-offs: practical considerations for engineers

As an AI Engineer, you must weigh costs, latency, and risk. Here’s a checklist of practical trade-offs:

Token cost vs. compute cost: Longer contexts increase per-request token costs and may increase latency. RAG’s retrieval/ANN costs are CPU/IO-bound but can be amortized across queries and cached.

Caching: RAG enables efficient caching at the passage level. If a high-volume query repeats, you can reuse retrieval and even precompute answers.

Observability: RAG gives you concrete artifacts (retrieved passages) to monitor. This helps debug hallucinations and measure relevance.

Provenance & audit: For compliance, you might need traceable evidence. A RAG system can store retrieval fingerprints and support audits.

Deployment complexity: RAG requires an index, embedding pipeline, retriever service, and occasionally a re-ranker. That’s more moving parts than a direct-prompt approach.

Security: Storing embeddings of sensitive documents requires encryption and strict access controls. Consider encryption-at-rest and role-based retrieval.

Architecting a decision: when to keep RAG, and when to simplify

Here’s a pragmatic decision flow an AI Engineer can use:

How large is your knowledge base?

Small (under a few MB) and static → consider direct-prompting, but measure token costs.

Medium to large → prefer RAG.

Does the knowledge change frequently?

High churn (daily/real-time updates) → RAG is almost mandatory.

Do you need provenance or regulatory traceability?

Yes → RAG with citations and stored retrieval logs.

Is cost/latency critical?

Yes → RAG with aggressive passage filtering and caching.

Is the user experience highly conversational with session memory?

Use long context windows for session memory + RAG for knowledge grounding.

How important is explainability?

High → RAG.

If provenance, tight cost/latency budgets, conversational session memory, or explainability matters to your application, RAG remains the right choice.
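The decision flow can also be written down as a small function, which is handy for documenting the reasoning in a design review. The field names, the 5 MB cut-off for a “small” knowledge base, and the returned labels are my own assumptions. Treat the output as a starting point for discussion, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    kb_size_mb: float
    changes_frequently: bool
    needs_provenance: bool
    cost_or_latency_critical: bool
    conversational: bool
    needs_explainability: bool


def recommend(req: Requirements, small_kb_mb: float = 5.0) -> str:
    """Map the questions in the decision flow to a coarse recommendation."""
    if req.conversational:
        # Session memory lives in the long context; knowledge comes from retrieval.
        return "long-context session memory + RAG"
    needs_rag = (
        req.kb_size_mb > small_kb_mb  # assumed threshold for "a few MB"
        or req.changes_frequently
        or req.needs_provenance
        or req.cost_or_latency_critical
        or req.needs_explainability
    )
    if needs_rag:
        return "RAG"
    return "direct prompting (measure token costs)"


# Hypothetical examples.
small_static_manual = Requirements(0.2, False, False, False, False, False)
regulated_corpus = Requirements(500.0, True, True, False, False, True)
print(recommend(small_static_manual))
print(recommend(regulated_corpus))
```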

Implementation tips and best practices for modern RAG systems

If you decide to keep or adopt RAG, here are practical tactics that leverage larger context windows without discarding retrieval:

Use embeddings wisely

Choose an embedding model that aligns semantically with your domain (general vs. domain-tuned). Regularly re-embed on major content updates.

Combine semantic + lexical search

Hybrid search (embedding + BM25) captures both semantic similarity and exact matches. This reduces missed-relevant-passage risk.
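One common way to merge a semantic ranking with a lexical one is reciprocal rank fusion (RRF), sketched below. RRF is my choice for the example, not the only option: weighted score blending is another. The document ids are made up, and `k=60` is the constant commonly used with RRF, not a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists: each doc scores sum(1 / (k + rank)) over the lists."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)


# Hypothetical results from an embedding index and from BM25 for the same query.
semantic = ["doc_a", "doc_b", "doc_c"]
lexical = ["doc_c", "doc_a", "doc_d"]
fused = reciprocal_rank_fusion([semantic, lexical])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents that appear in both lists (`doc_a`, `doc_c`) rise above those found by only one retriever. RRF works on ranks alone, so you don’t need to normalize embedding similarities against BM25 scores.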

Store passage metadata

Include doc id, section headers, timestamps, author, and access controls in stored metadata. Use them for provenance and access decisions.

Rerank after retrieval

Lightweight cross-encoders or a cheap LLM reranker can reorder initial ANN results to surface the best passages.

Limit tokens intelligently

Even with large windows, keep a hard token budget per request. Prioritize the most relevant passages and prefer passages with a high signal-to-noise ratio.

Use summarization strategically

For very long documents, generate an abstractive or extractive summary and include that alongside full passages when the window allows.

Cite sources in outputs

Always return the minimal set of citations to the user (document IDs, paragraph references). This supports trust and debugging.

Monitoring and metrics

Track retrieval precision@k, grounding rate (percentage of answers that cite a retrieved source), latency, token counts, and end-user satisfaction.
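Two of these metrics are simple enough to sketch directly. The record layout (`citations` and `retrieved` keys) is a hypothetical logging format. I count an answer as grounded only if it cites at least one passage that was actually retrieved for it.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved ids that are labeled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in retrieved[:k] if doc_id in relevant) / k


def grounding_rate(answers: list[dict]) -> float:
    """Share of answers citing at least one passage that was actually retrieved."""
    if not answers:
        return 0.0
    grounded = sum(
        1 for a in answers if set(a["citations"]) & set(a["retrieved"])
    )
    return grounded / len(answers)


# Made-up evaluation data.
p_at_3 = precision_at_k(["a", "b", "c", "d"], relevant={"a", "c", "x"}, k=3)
answer_log = [
    {"citations": ["a"], "retrieved": ["a", "b"]},  # grounded
    {"citations": [], "retrieved": ["c"]},          # no citation at all
    {"citations": ["z"], "retrieved": ["c"]},       # cites something never retrieved
    {"citations": ["c"], "retrieved": ["c"]},       # grounded
]
rate = grounding_rate(answer_log)
print(round(p_at_3, 3), rate)
```

The third record is the interesting one: a citation to a source that was never retrieved is a strong hallucination signal and worth alerting on separately.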

Privacy & governance

Treat embeddings and indexes as sensitive assets. Use encryption and role-based retrieval. Implement deletion workflows for right-to-be-forgotten requests.

Graceful fallbacks

If retrieval returns poor matches, design fallback behaviors: ask a clarifying question, escalate to a human in the loop, or run a broadened search.
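A fallback policy can be as small as a function over the retrieval scores. The threshold of 0.35 and the order of fallbacks (broaden first, then ask, then escalate) are assumptions for this sketch. The right values depend on your similarity metric and on what your users will tolerate.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    BROADEN_SEARCH = "broaden_search"
    ASK_CLARIFICATION = "ask_clarification"
    ESCALATE_TO_HUMAN = "escalate_to_human"


def next_action(
    scores: list[float], failed_attempts: int, min_score: float = 0.35
) -> Action:
    """Pick a behavior from the best retrieval score and prior failed attempts."""
    if max(scores, default=0.0) >= min_score:
        return Action.ANSWER
    if failed_attempts == 0:
        return Action.BROADEN_SEARCH      # cheapest fallback: retry with a wider net
    if failed_attempts == 1:
        return Action.ASK_CLARIFICATION   # involve the user before giving up
    return Action.ESCALATE_TO_HUMAN


print(next_action([0.62, 0.40], failed_attempts=0))
print(next_action([0.12, 0.08], failed_attempts=0))
print(next_action([], failed_attempts=2))
```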

Example scenarios: when RAG shines (realistic cases)

Enterprise knowledge assistants: An employee asks about contract clauses across thousands of contracts. RAG finds the exact contract and clause; large windows let you feed a complete contract for better interpretation.

Customer support: A support agent needs product logs and release notes pulled together for debugging. RAG enables precise retrieval and attaches timestamps and versions.

Regulated domains (healthcare, legal, finance): Outputs must be grounded in up-to-date policies and cite sources. RAG provides the required traceability.

Personalized assistants: Combine session memory in the long context with RAG for product knowledge, delivering personalized and grounded answers.

When to experiment with RAG-lite (a lighter version of RAG) or simplifications

If your application is resource-constrained or you want to minimize complexity, try these lighter options:

Document embeddings + short-context prompting: Keep a small top-k retrieval and include only a few passages.

Cached Q&A: Precompute answers for frequent queries and serve them directly.

On-device or edge indexing: For privacy-heavy apps, run retrieval and inference locally without calling cloud LLMs.

These reduce operational burden while preserving many benefits of RAG.
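The cached Q&A option, for instance, needs little more than a dictionary with expiry. This sketch assumes exact-match lookup on a normalized query string (lowercased, whitespace collapsed) and a fixed time-to-live. The class name, the TTL default, and the injectable `now` argument, which only exists to make the example testable, are my own choices.

```python
import time
from typing import Optional


class AnswerCache:
    """Exact-match answer cache with a time-to-live, keyed by normalized query."""

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self.ttl = ttl_seconds
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(query: str) -> str:
        # Lowercase and collapse whitespace so trivial variants share an entry.
        return " ".join(query.lower().split())

    def put(self, query: str, answer: str, now: Optional[float] = None) -> None:
        stored_at = time.time() if now is None else now
        self._store[self._key(query)] = (stored_at, answer)

    def get(self, query: str, now: Optional[float] = None) -> Optional[str]:
        current = time.time() if now is None else now
        entry = self._store.get(self._key(query))
        if entry is None:
            return None
        stored_at, answer = entry
        if current - stored_at > self.ttl:
            return None  # stale: the caller should fall through to the pipeline
        return answer


cache = AnswerCache(ttl_seconds=60)
cache.put("What is the return policy?", "Returns are accepted within 30 days.", now=0)
fresh = cache.get("  what is the RETURN policy? ", now=10)
stale = cache.get("What is the return policy?", now=100)
print(fresh, stale)
```

The TTL matters: a cached answer is only as fresh as the moment it was computed, so keep it short for content that changes often.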

The role of the AI Engineer: strategy, not just implementation

For an AI Engineer, the decision to use RAG is both technical and product-driven. Your responsibilities include:

Designing tests to compare hallucination rates with and without RAG.

Measuring token costs and latency under realistic traffic.

Building observability around retrieval quality.

Coordinating with legal/compliance on provenance and data retention.

Communicating trade-offs to stakeholders (product managers, security, and business teams).

RAG isn’t just an engineering pattern; it’s an organizational control point that balances capability, cost, and risk.

Final thoughts and a pragmatic conclusion

Advances in context windows are transformative: they simplify prompt design, reduce chunking artifacts, and enable richer conversational memory. But they are not a replacement for RAG. RAG remains a foundational pattern for grounding, freshness, cost control, provenance, and enterprise-grade access control. The future is hybrid: AI Engineers will combine long-context windows with smarter retrieval, hybrid search algorithms, and principled prompt engineering.

If I had to summarize my advice to fellow AI Engineers in one line: use context windows to improve user-facing conversational memory and reasoning, but keep RAG as the canonical way to provide up-to-date, auditable, and cost-efficient knowledge grounding in production systems.